I've been quite busy over the last month or so and haven't really had the time to put a proper post together. To be fair, there hasn't been much of note to talk about. Sure, I spoke about the RX 6500 XT, but there was no real point in covering the RTX 3050 because it was unsurprising and the industry reacted accordingly... So, in light of the hubbub surrounding the RDNA 3 rumours, I thought I'd pull together my previous data sets and look at them in a different light.

Previously, I've looked at the relative value of GPUs over the last ten years: their inter- and intra-generational performance and price, and whether and when upgrades made sense. What I didn't look at, and what many people haven't looked at, is the trend in performance we're getting per year in each GPU class and where that may be taking us...

Performance...

Here's the simplistic reading of this situation: things are getting better, they're getting better all the time...

That's a pretty trite and obvious statement, though, so let's break it down into real numbers and graphs:

What's startlingly clear to me is how closely both AMD and Nvidia have hewn to each other's performance. Yes, okay, I normalised the performance to Nvidia's classes for AMD's cards but that really only affects the price points of each entry, not the actual available performance per tier in each generation. There are gaps on AMD's side, notably in the high-end in those years where AMD decided they didn't want to compete or, perhaps more honestly, when they felt they couldn't compete based on either cost or efficiency (a smart decision in many ways!).

Paul, over on NotAnAppleFan, is fond of saying that transistor count is a direct indicator of GPU performance. I personally haven't done that analysis, but I do find it continually interesting that Nvidia's architectures, however much people decry them as power-hungry, are competitive with AMD's architectures, which sit on a more advanced process node and run at higher clock speeds. I don't hear or read people talking about that, so I'm inclined to assume that Paul is correct: TechPowerUp has the RX 6900 XT at 94% of the performance of the RTX 3090, which correlates almost exactly with the 26.8/28.3 billion transistor ratio between the two cards... which, quite frankly, is a bit surprising!
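As a quick sanity check on that ratio, here's a trivial Python sketch. The transistor counts are the figures quoted above and the 94% is TechPowerUp's relative-performance number; treating the transistor ratio as a performance predictor is Paul's heuristic, not something this calculation proves.

```python
# Transistor counts in billions, as quoted above.
RX_6900_XT_TRANSISTORS = 26.8
RTX_3090_TRANSISTORS = 28.3

# TechPowerUp's relative performance of the RX 6900 XT vs the RTX 3090.
RX_6900_XT_RELATIVE_PERF = 0.94

transistor_ratio = RX_6900_XT_TRANSISTORS / RTX_3090_TRANSISTORS
difference = transistor_ratio - RX_6900_XT_RELATIVE_PERF

print(f"transistor ratio: {transistor_ratio:.3f}")  # ~0.947
print(f"difference vs. measured performance: {difference:+.3f}")
```

The two numbers land within about a percentage point of each other, which is why the correlation looks so neat for this particular pair of cards.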

But, let's move on to the actual reason for this post.

Increase in performance...

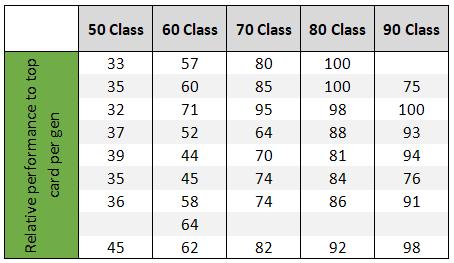

Like I said, I decided to re-jig my numbers and arrange them differently from how I've analysed them previously. Originally, I was trying to show how the performance gain per generation is slowing down, because you can see that in the data even if it isn't clear from any of the graphs above (except for the 50 class cards)... However, I struggled to represent that in an easy-to-read graphical form. Eventually, I settled on the idea of "performance increment per gen, per year": the performance improvement you got in each class from one generation to the next, divided by the number of years between those two generations.
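To make that metric concrete, here's a minimal Python sketch of how it could be computed, assuming the improvement is expressed as a fractional gain (new / old - 1) and the gap is measured in years between launches. The function name and the example numbers are hypothetical and purely illustrative; they are not my actual data set.

```python
def increment_per_year(releases):
    """Performance increment per gen, per year, for a single card class.

    releases: list of (launch_year, relative_performance) tuples, oldest first.
    Returns a list of (launch_year, fractional_gain_per_year) for each new gen.
    """
    results = []
    for (year_old, perf_old), (year_new, perf_new) in zip(releases, releases[1:]):
        years = year_new - year_old
        if years <= 0:
            # A zero or negative gap would divide by zero or reverse the sign.
            raise ValueError("launch years must be strictly increasing")
        gain = perf_new / perf_old - 1.0  # e.g. 0.30 means +30% gen on gen
        results.append((year_new, gain / years))
    return results


# Hypothetical class history: relative performance index per launch year.
example = [(2016, 100.0), (2018, 160.0), (2020, 208.0)]
for year, rate in increment_per_year(example):
    print(year, f"{rate:.1%} per year")
```

Dividing by the gap between launches is what lets a generation that took three years be compared fairly against one that took two.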

And here's that data:

The interpretation of this data is open to several avenues of thought:

First off, across the board, in every class we are seeing a slowly decreasing amount of performance gained per year. Although this decline is roughly "equal" across the class levels, it obviously affects lower class cards worse than upper class cards because of the disparity in performance between the tiers. Stagnation at the lower end is worse than stagnation at the upper end because game system requirements are still increasing year on year...

Previously, I said it was better to go high-end or low-end in order to get your money's worth when buying a GPU, essentially ignoring the mid-range graphics cards. I still stand by those sentiments and I think that these numbers back that assertion to a good degree.

The per-year advancement in 50 class card performance is essentially plateauing, and the higher end cards in the 80 and 90 classes are slowing down as well, to a lesser degree. Now, you may be tempted to think that this is the polar opposite of what I just said, but remember, I just told you that these curves are relative to the level of performance in each class.

What we're saying here is that your 50 class purchase will not be eclipsed by the next generation card as quickly. Also, the gently declining curves for the 80 and 90 classes sit increasingly higher than the more steeply declining curve for the 50 class cards, meaning that the absolute (or real-world) performance difference between cards in each of those classes is getting larger over time. In other words, the performance differential is increasing because 80 and 90 class cards are not only improving by a larger margin per generation but also being released more regularly.
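The arithmetic behind that is simple compounding: a bigger percentage gain applied to an already bigger base widens the absolute gap every generation. Here's a small Python sketch; the starting performance indices and per-generation gains are hypothetical numbers chosen only to illustrate the effect.

```python
def absolute_gaps(low_perf, high_perf, low_gain, high_gain, generations):
    """Absolute performance gap between a lower and a higher class, per generation.

    low_gain / high_gain are fractional gains per generation (0.2 = +20%).
    Returns the gap at launch and after each subsequent generation.
    """
    gaps = []
    for _ in range(generations + 1):
        gaps.append(high_perf - low_perf)
        low_perf *= 1 + low_gain
        high_perf *= 1 + high_gain
    return gaps


# Hypothetical: a 50 class card at index 100 and a 90 class card at 300.
print(absolute_gaps(100.0, 300.0, low_gain=0.2, high_gain=0.4, generations=3))
```

Even with equal percentage gains (low_gain == high_gain), the gap still grows every generation, just more slowly.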

To put it another, less complicated way: if you buy a 50 class card, then because of the lag between hardware releases and the slowdown in absolute performance gains per generation, the next (and maybe even the following) gen card will not be that much more performant, if the current trends continue, and will take longer and longer to be released. I think these trends are likely to continue based on wafer costs, scarcity, the parts crisis and consumer demand.

The big problem we face as consumers is that GPU performance is increasing through more resources, not through better efficiency or per-resource-unit performance, which I'll get to in the next section.

In the same way, if you buy an 80 or 90 class card, its performance increase per year is slowing as well. Sure, the performance of the next and the following gen cards will be relatively higher but, proportionally, it will not be that much greater. You are not losing out by sticking with your current card: even if there's a huge generational leap in performance, once you account for the launch cadence of the generations themselves, these cards hold their value quite well, meaning that you'll be able to upgrade more cheaply to the following generation, if that's your preference.

This also depends on the resolution and refresh rate of your monitor: if you're trying to run 4K at 120 fps, you'll see larger differences; at 1440p or 1080p, you'll feel less of a need to upgrade soon.

The converse is true for the 60 and 70 class cards.

*The performance per tier of Nvidia cards is squashing upward towards the upper tiers...*

I would summarise those tiers of performance as basically a linear increase per year. This is actually bad for consumers because it means two things:

- The card you bought this gen will be supplanted by a card that performs much better next gen.

- The performance of these tiers is actually edging towards that of the class above them, which will necessarily increase their value in the eyes of the manufacturers.

These two factors are terrible for buyers: they mean that your mid-range card will lose value much more quickly than cards at the two extremes of performance, and that the mid-range is essentially squashing up against the higher tiers.

I have said on other platforms that I felt each card from Nvidia should have been a tier down, i.e. the RTX 3070 should have been the RTX 3060 Ti, etc. The reason for this is the actual game performance of each of these cards in modern titles: it's not that great when you take into account higher resolution and higher refresh rate screens... However, looking at the numbers in the table above, I can see that Nvidia (and therefore AMD, who are matching their performance tiers) are already pushing the performance of each tier up towards that of their most performant card in each generation.

But how does this all impact the next gen cards?

*The next gen cards, as rendered by a PC hardware enthusiast... (heavenly glow not included)*

The future...

I've read a lot of leaks regarding RDNA 3 and Ada Lovelace (or whatever it ends up being codenamed) and, quite frankly, a lot of them are nonsensical to me. I don't believe the 2-3x gaming performance claims, and I think the above analysis adds weight to that scepticism. Such a large gen-on-gen increase in performance is unheard of and out of line with the trend of advancement over the last ten years.

The 90 class cards have averaged 1.42x the performance of their predecessors, gen on gen, with an all-time high of 1.66x. Given the costs associated with producing these high-end cards, and taking into account the fact that real-world performance is not scaling linearly with resources in current GPU architectures, these cards will be insanely priced but will not offer double or triple the performance in real-world gaming terms. In FP32 compute, maybe*... but the whole point is that these are meant to be gaming cards.
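One way to put the 2-3x claims in context is to ask how many "average" generational jumps they represent. Assuming gains compound multiplicatively, a jump of X is worth log(X) / log(1.42) average 90 class generations. A quick Python sketch using the figures above (the helper name is my own):

```python
import math

AVERAGE_90_CLASS_GAIN = 1.42  # average gen-on-gen multiplier quoted above
BEST_90_CLASS_GAIN = 1.66     # all-time high quoted above


def generations_equivalent(claimed_multiplier, per_gen_multiplier):
    """How many generations at per_gen_multiplier compound to claimed_multiplier."""
    return math.log(claimed_multiplier) / math.log(per_gen_multiplier)


for claim in (2.0, 3.0):
    avg = generations_equivalent(claim, AVERAGE_90_CLASS_GAIN)
    best = generations_equivalent(claim, BEST_90_CLASS_GAIN)
    print(f"{claim:.0f}x = {avg:.1f} average gens or {best:.1f} record-setting gens")
```

By that measure, a 2x jump is roughly two average generations rolled into one, and 3x is about three; even measured against the record 1.66x, both claims would need more than one record-setting generation's worth of progress.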

*Looking at various gaming comparisons, the RTX 3080 delivers around 1.53x the performance of the RTX 2080 at 1440p and 1.71x at 4K (if I remember correctly, the gap is smaller at 1080p). However, the difference in FP32 throughput is 2.96x. So, at 1440p, the 3080 delivers only about half as much gaming performance per unit of FP32 compute as the 2080 did (1.53 / 2.96 ≈ 0.52). This is a clear trend we will see going forward: more resources thrown at the problem, but with lower efficiency in actual game performance output. This will impact RDNA 3 as well...
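Here's that scaling-efficiency calculation as a short Python sketch: the gaming uplift divided by the FP32 uplift. The multipliers are the ones quoted above; 1080p is left out because I don't have a number for it.

```python
FP32_UPLIFT = 2.96  # RTX 3080 vs RTX 2080, FP32 throughput
GAMING_UPLIFT = {"1440p": 1.53, "4K": 1.71}  # RTX 3080 vs RTX 2080, as quoted


def scaling_efficiency(gaming_multiplier, compute_multiplier):
    """Gaming performance per unit of FP32 compute, relative to the older card.

    1.0 would mean gaming performance scaled perfectly with FP32 throughput.
    """
    return gaming_multiplier / compute_multiplier


for resolution, uplift in GAMING_UPLIFT.items():
    print(f"{resolution}: {scaling_efficiency(uplift, FP32_UPLIFT):.2f}")
```

That works out to roughly 0.52 at 1440p and 0.58 at 4K: the higher the resolution, the more of the extra compute actually gets used, but nowhere near all of it.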

This is the problem both Nvidia and AMD are facing (and one that Intel will suffer from, too). No one is creating better architectures; they're just scaling outward in resources. That means the Dual Compute Unit complexes, which AMD can't decide whether to call WGPs or not; Intel's Execution Units (which Intel also doesn't want to call EUs); and Nvidia's SMs... which Nvidia is fine calling SMs.

You can argue that RDNA is a great advancement over Vega, but only really in terms of efficiency. I estimated that the Steam Deck (8 RDNA 2 CUs) would have approximately 2x the graphics performance of an Aya Neo (6 Vega CUs), which matches well with the 1.5x performance over a Ryzen 9 4900HS (8 Vega CUs) that Digital Foundry observed. That's only a ~20% increase per CU, per clock, between the architectures... for an architecture that was released three years ago versus one that was released five years ago.
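The reason those two figures agree is plain CU-count scaling: if performance scaled linearly with CU count, and everything else (clocks included) were equal, which is a big simplifying assumption, then a 2x advantage over 6 Vega CUs becomes 2 × 6/8 = 1.5x over 8 Vega CUs. As a tiny Python sketch, with a hypothetical helper name:

```python
def rescale_by_cu_count(ratio, from_cus, to_cus):
    """Rescale a performance ratio measured against a GPU with from_cus CUs
    to one measured against a GPU of the same architecture with to_cus CUs.

    Assumes (hypothetically) that performance scales linearly with CU count
    and that clocks, memory bandwidth and power limits are otherwise equal.
    """
    return ratio * from_cus / to_cus


# Steam Deck estimate: ~2x an Aya Neo (6 Vega CUs) -> vs a 4900HS (8 Vega CUs).
print(rescale_by_cu_count(2.0, from_cus=6, to_cus=8))  # 1.5
```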

Personally, I think it's fine... but not amazing, and it comes back to the problem we have with low-end discrete GPUs: without increasing the quantity of available resources, we're not getting huge performance increases. If we break down the performance per year in this market segment, we're talking about APUs with graphical performance broadly equivalent to what was available in 2018-2019 with the 2400G/3400G Vega 11 designs... though, as I said, much more energy efficient.

And that's not impressive at all. We're essentially standing still...
