
The RTX 4070 is an interesting card. On paper it is $100 more expensive than the 3070, but it is cheaper than what most people were probably able to purchase their 30 series card for during the period from late 2020 through to just before the release of the 40 series in late 2022.

Certainly, I paid slightly less than MSRP on the base model and that was around €50 cheaper than I paid for my 3070 when, in desperation, I just bought the first card I could get my hands on because I didn't have a graphics card.

However, the 40 series cards are almost literally languishing on store shelves due to their high prices and lacklustre performance increases over their 30 series counterparts, with the notable exception of the RTX 4090.

With that in mind, I want to focus on that step forward in performance and look at how the RTX 4070 improves on the 3070 on a mid-range system.

Test setup and Introduction...

The system in question:

- Intel i5-12400

- 16GB DDR4 3800 CL16 Patriot Viper 4400

- Aorus B660i

- WD Blue 570 1 TB

- RTX 3070 Zotac Twin Edge OC

- RTX 4070 MSI Ventus 2x

Sure, there are a lot of reviews that look at the absolute performance of graphics cards by pairing them with top-tier computer systems, but it is well known that people on lower-end hardware might not experience quite the level of gains observed in those reviews.

This is due to the various overheads that are present in software, particularly for Nvidia GPUs, and it has been noted by outlets such as Hardware Unboxed that AMD GPUs have a performance advantage on lower-end CPUs.

The stock experience is one point of view. However, something I've been tentatively dipping my toes into over time has been undervolting/power limiting and overclocking. In that vein, one of the main points from all the reviews I've seen has been how the RTX 4070 is effectively an RTX 3080 but at ~120 W lower power requirements.

That, in and of itself, is impressive, but all the 4070s I can find for sale/review have a single 8-pin power connector and a hard 200 W power limit on most reference designs. There are exceptions to this power limit: I was able to find cards allowed up to a 216 W limit, with the Nvidia Founders Edition having 220 W and the MSI Gaming X Trio having a much higher power limit of 240 W*. This means that, quality of silicon aside, the potential to overclock most models of this card is potentially minimal in comparison with its predecessor, the RTX 3070, which had many models able to supply 250 W and even one up to 330 W from the stock power limit of 225 W.

*Both of these latter cards use the 12VHPWR connector
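To put those power limits into perspective, here is a minimal sketch that turns the wattages quoted above into percentage headroom over the stock limit. The function name `headroom_pct` and the dictionary labels are my own, purely for illustration, and the stock limits (200 W and 225 W) are simply the figures quoted in the text.

```python
def headroom_pct(stock_w: float, max_w: float) -> float:
    """Extra board power available above the stock limit, in percent."""
    return (max_w - stock_w) / stock_w * 100.0


# Maximum power limits quoted in the text (labels are illustrative only).
rtx_4070_limits = {"216 W models": 216, "Founders Edition": 220, "MSI Gaming X Trio": 240}
rtx_3070_limits = {"250 W models": 250, "330 W model": 330}

# Stock limits as quoted in the text: 200 W (4070) and 225 W (3070).
headroom_4070 = {name: round(headroom_pct(200, w), 1) for name, w in rtx_4070_limits.items()}
headroom_3070 = {name: round(headroom_pct(225, w), 1) for name, w in rtx_3070_limits.items()}

for card, table in (("RTX 4070", headroom_4070), ("RTX 3070", headroom_3070)):
    for name, pct in table.items():
        print(f"{card} {name}: +{pct}% over stock")
```

On these numbers, most 4070s get somewhere between 0% and 10% extra power to play with, with the Gaming X Trio at 20%, while the 3070 models mentioned range from roughly 11% up to about 47%.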

So, how much of the gap can be closed by an overclocked 3070 and how much can the gap be extended, in kind, by an OC'ed 4070?

I'll get into the power and clock scaling of the 4070, next time. So, let's just look at the overclocked and power limited results from each version of the card I own. It is important to note that I am using an optimised overclock - reducing the power limit to 90% on both cards. This actually reduces the amount of performance gain by around 2% on the 4070 and between 2 - 4% on the 3070 from what is actually possible on my specific cards (and with my specific hardware setup) but I think this allows for wiggle-room on quality of silicon, card power and user skill to give a generalised picture.

The Tests...

I have two types of test - canned benchmarks and custom runs. The difference here is that for the games where I'm using my own custom data, I'm adding in extra information such as the %GPU utilisation, the GPU power draw, largest frametime spike and the number of frametime excursions above 3 standard deviations from the average frametime during the run.

This last metric is a modification of what I was doing last time, when I defined a deviation of at least 8 ms from the prior frametime as a visible stutter - following on from Gamers Nexus' methodology. My new methodology allows for the change in user experience as performance scales*. It can be a bit deceptive, though: if the standard deviation of the data is quite small, there is a chance that the number of excursions will be over-represented.

*i.e. an 8 ms deviation from a 16.66 ms average is more noticeable than the same deviation at a 33.33 ms average.
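For anyone wanting to reproduce these metrics, the following is a rough sketch of how they could be computed from a list of frametimes, not the exact spreadsheet maths I use. It assumes that an "excursion" is any frame slower than the run average plus 3 standard deviations, and that the older rule counts any frame-to-frame change of 8 ms or more in either direction. The function name `frametime_summary` and the sample data are invented for illustration.

```python
from statistics import mean, pstdev


def frametime_summary(frametimes_ms, sigma=3.0, delta_ms=8.0):
    """Summarise one benchmark run from a list of frametimes in milliseconds."""
    avg = mean(frametimes_ms)
    sd = pstdev(frametimes_ms)  # population standard deviation of the run
    threshold = avg + sigma * sd

    # Excursions: frames slower than the average plus `sigma` standard deviations.
    excursions = sum(1 for ft in frametimes_ms if ft > threshold)

    # Older fixed rule: a frame-to-frame change of at least `delta_ms`.
    delta_stutters = sum(
        1
        for prev, cur in zip(frametimes_ms, frametimes_ms[1:])
        if abs(cur - prev) >= delta_ms
    )

    return {
        "avg_fps": 1000.0 / avg,
        "max_ms": max(frametimes_ms),
        "excursions": excursions,
        "delta_stutters": delta_stutters,
    }


# Made-up run at roughly 60 fps with a single 40 ms spike at the end.
spiky = frametime_summary([16.0] * 50 + [17.0] * 49 + [40.0])

# Made-up, very smooth run: a 0.5 ms blip is still flagged as an excursion
# because the standard deviation is tiny, but the 8 ms rule ignores it.
smooth = frametime_summary([10.0] * 99 + [10.5])

print(spiky)
print(smooth)
```

The second run shows the caveat mentioned above: with a very small standard deviation, a blip nobody would notice still counts as an excursion, so the number should be read alongside the largest frametime spike.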

The other metrics I will include are relatively self-explanatory. So, let's get on with the show!


Starting with the old stand-bys: Unigine Heaven isn't so suitable for testing modern graphics cards as I've seen that it's less demanding on the functional hardware within the newer GPU designs and is very core clock frequency sensitive - so it's relatively easy to "make the numbers go up". Superposition uses more of the silicon on the chip with its screen space ray traced global illumination but is still quite easygoing on modern mid-range and higher end GPUs. While my scores aren't anything to write home about (I'm somewhere in the 800 - 900th range with the above Superposition results) it's clear that the RTX 40 series silicon can clock very high, very easily.

I will make a note here that on the reference model of the card there is no way to increase the power limit - I am unable to go past 200 W (100% limit). There is also minimal ability to adjust the core voltage - my card will not go above or (stably) below 1.1 V (unlike some others). On top of that, I am not even able to get my card to heat up above 69 degrees Celsius. The only conclusion I can make is that the card is power and voltage limited to such an extent that there is minimal headroom to overclock. The best score I've been able to obtain in Superposition has been 10202 pts, which is really not that much higher


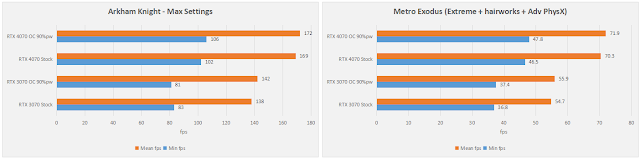

Going through the other canned benchmarks, we're looking at around a 20 - 30% performance increase (which is pretty typical for the RTX 3080/4070 over the RTX 3070). What is most impressive is the fact that the card is doing that whilst saving around 20% of the power use. The only issue here is that these are not modern titles and none of them involve real gameplay. So, let's take a look at those real-world scenarios...
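Before moving on, it's worth spelling out what those two rough figures imply for efficiency. This is a back-of-the-envelope sketch using only the approximate 20 - 30% uplift and ~20% power saving quoted above, not per-game measurements; the function name `perf_per_watt_gain` is just something I made up for the illustration.

```python
def perf_per_watt_gain(perf_uplift: float, power_saving: float) -> float:
    """Relative performance-per-watt improvement as a fraction.

    perf_uplift:  e.g. 0.25 for 25% more performance
    power_saving: e.g. 0.20 for 20% less power draw
    """
    return (1.0 + perf_uplift) / (1.0 - power_saving) - 1.0


# Rough bounds from the canned benchmarks: 20 - 30% faster at ~20% less power.
low_gain = perf_per_watt_gain(0.20, 0.20)
high_gain = perf_per_watt_gain(0.30, 0.20)

print(f"Performance per watt: +{low_gain * 100:.1f}% to +{high_gain * 100:.1f}%")
```

In other words, if those ballpark numbers hold, the 4070 is delivering somewhere around one and a half times the performance per watt of the 3070 in these canned tests.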

First up, there's Hogwarts Legacy. This manual benchmark is a run around Hogsmeade on the latest patch as of 06/05/2023. The performance is much improved since launch, though I am also testing with mostly high settings, with only materials and textures set to ultra. You can see that GPU utilisation is quite low - even for the 3070. There are also small stutters related to loading of data onto the GPU and we can see that there are more of these experienced by the user of the 3070, even if the average fps is only very slightly increased on the 4070.

It's clear to me that we are slightly CPU bottlenecked here, while the lower amount of VRAM on the 3070 is the cause of the increased number of stutters (hence the improvement in the minimum fps when memory is overclocked!).


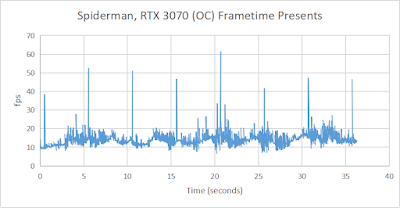

Next, we have Spider-Man, using high settings. Once again, we're in quite a similar situation to that in Hogwarts - the game is very heavy on the CPU. One interesting facet is that the RTX 3070 struggled a bit when overclocked and I am at a loss to explain why*. The frametime graph shows a consistent stutter, so I am inclined to throw this result out as being affected by something going on in the OS/background tasks - unfortunately, I have not had the time to re-re-install the 3070. However, best case scenario, we are looking at equivalent performance between all four configurations, only at a much lower power draw on the 4070 (due to the low utilisation).

Apologies - I forgot to include this chart when I posted this review...

The RTX 3070 OC result was marred by a consistently spaced system interrupt during testing of Spider-Man...

*[Update 24/06/2023] It seems that there was, indeed, a resource issue occurring during the test. From a similar observation made during Igor Wallossek's VRAM testing, it seems most likely that there was contention between the game and another programme for VRAM utilisation.

Following that, we have The Last of Us. This release was also marred by terrible performance at launch, though some of the issues have since been resolved (such as inappropriate LOD/MIP level on assets when selecting medium and low quality textures). While the increased graphical grunt of the 4070 is giving a small boost to the average, the minimum fps are entirely influenced by the incessant stutters and poor frametimes that are experienced by the user in this title. As a result, at this resolution my system is entirely CPU bound.


Finally, we take a look at A Plague Tale: Requiem. Taking a turn around the busy town scene, not only can we see a small performance improvement when moving to the 4070, we also observe that the game delivers frames exceptionally well, across the board.


Conclusion...

And so ends our first little foray into mid-range gaming and we can draw an important conclusion here - while top-of-the-line CPUs and new systems with DDR5 might be able to eke out a 20% performance advantage for the RTX 4070 over the RTX 3070, on a pretty decent, modern mid-range CPU, there's effectively zero difference in performance at 1080p when running modern triple-A games.

For older titles, or titles where the CPU is not a bottleneck, we are looking at that expected 25 - 30 % performance increase and that's acceptable.

Going back to those CPU-limited titles: at higher resolutions and in situations where the 8 GB of VRAM is limiting the performance (for instance, if I bumped up the settings to the very maximum), there will be a more noticeable performance uplift, even on the i5-12400. However, it seems like the better choice is to invest in a faster CPU than a faster GPU, as a mid-range system isn't going to get the best performance out of that graphics card!

In the next entry in this series, I will explore the differences on my AMD system and compare against the RX 6800... So, stay tuned!

3 comments:

"However, it seems like the better choice is to invest in a faster CPU than a faster GPU" hmmm, would be interesting to see how something like a 13700 changed your 12400 results.

To be honest, when I made this purchase I was torn between going with a 13400 + B760 + DDR5 32GB platform or this RTX 4070.... Was considering looking at platform uplift instead.

No way I can afford a new CPU now, so that's on the backburner. Maybe if 14th gen is socket compatible, though!

From every leak I've seen I suspect there is zero chance of that. MAYBE if the "ultra" 13th gen rumors for mid-late 2023 are true though, "regular" 13th-gen will see clearance prices....
